Knowledge Collapse: Daron Acemoglu Warns Agentic AI Could Hollow Out Humanity’s Collective Intelligence

New Acemoglu research shows that even highly capable generative and agentic AI can destroy society’s shared knowledge base by crowding out human learning incentives, risking long-run stagnation in innovation, education, and economic growth.

Key Points

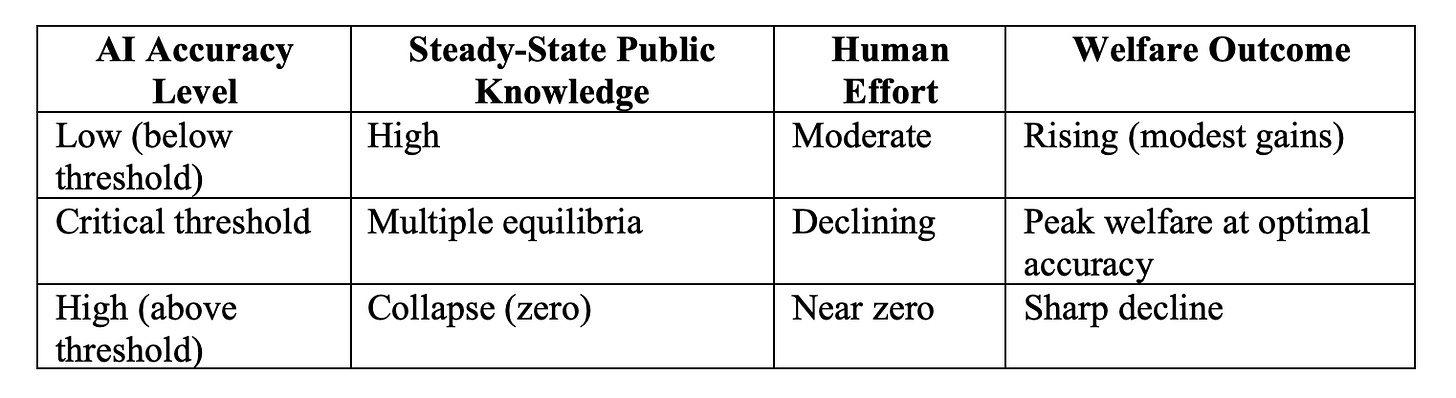

Acemoglu, Kong, and Ozdaglar model how agentic AI substitutes for costly human effort, reducing the public signals that build collective “general knowledge”, the shared foundation of society’s information ecosystem.

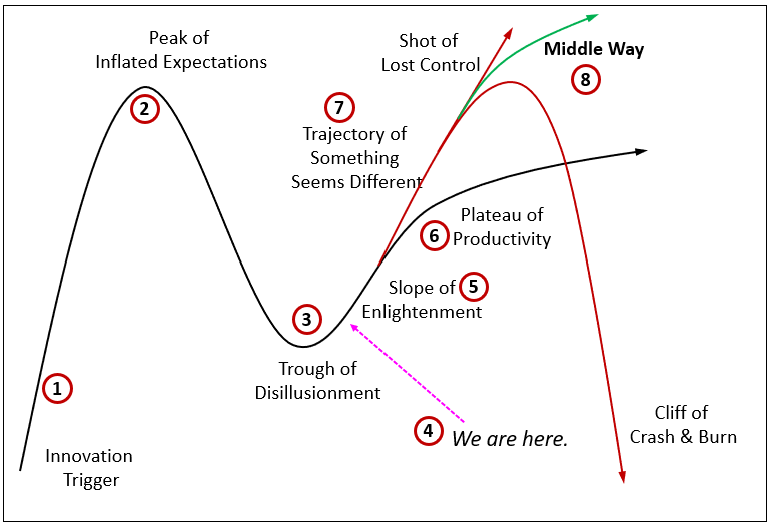

Short-term gains in decision quality come at the expense of long-run erosion: when AI accuracy exceeds a critical threshold, the system can collapse to zero general knowledge despite personalized recommendations.

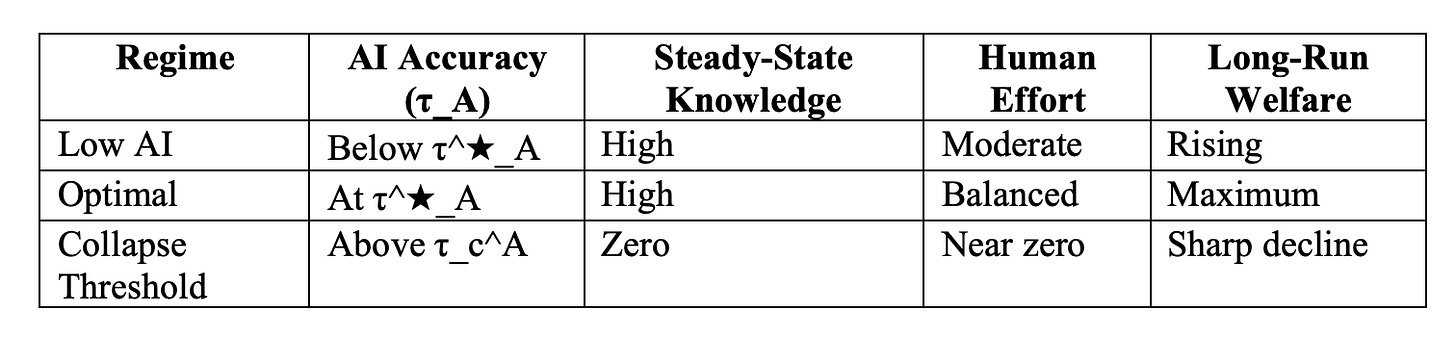

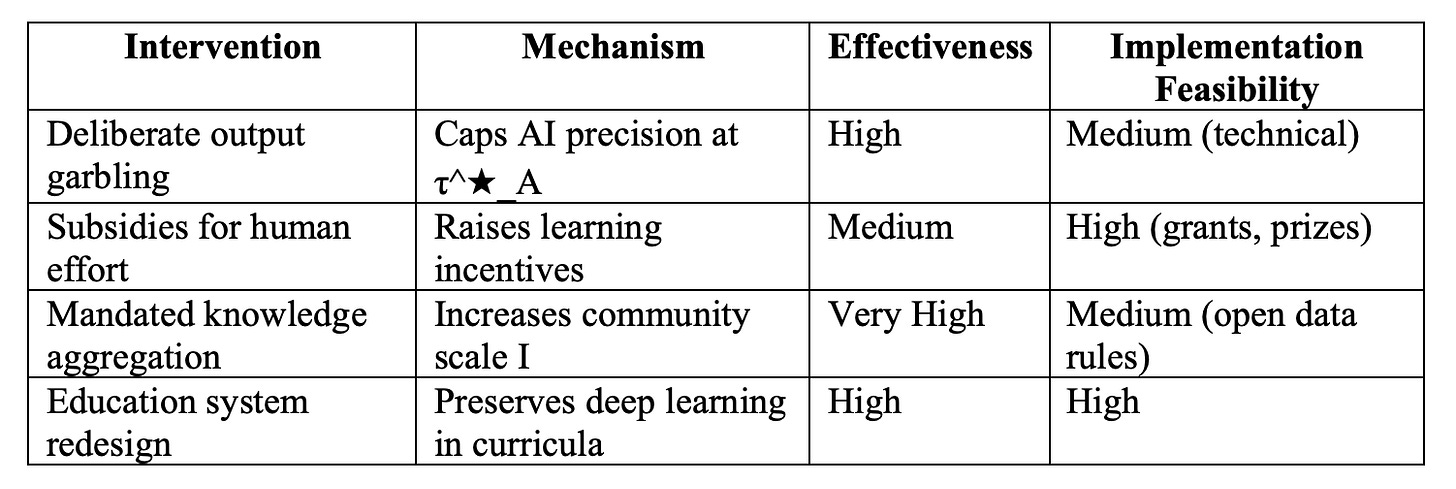

Welfare is non-monotone: modest AI helps, but excessive accuracy triggers collapse. Optimal regulation (e.g., deliberate garbling of outputs) is needed to preserve incentives.

Implications for sectors: education, science, and R&D face reduced creativity and skill formation; society risks losing the “knowledge commons” that drives innovation.

Economic effects: depleted collective knowledge slows productivity growth and widens inequality, as only those with strong private context or access to high-quality AI benefit. Greater knowledge aggregation (e.g., open platforms) unambiguously raises resilience and welfare.

Acemoglu’s Core Argument: The Substitution Trap

In their February 2026 NBER working paper “AI, Human Cognition and Knowledge Collapse” (w34910), Daron Acemoglu and co-authors build a dynamic model of decision-making across “islands” (communities). Every prediction requires two complementary inputs: general knowledge (X_t) — the shared, public stock of understanding about the world — and context-specific knowledge (individual, private signals).

Human effort is costly but produces both private signals and public signals that accumulate into collective general knowledge — creating a positive externality. Agentic AI delivers high-precision context-specific advice, directly substituting for human effort. This substitution weakens the flow of new public signals, causing general knowledge to depreciate over time.

If human effort is sufficiently elastic and AI accuracy crosses a critical threshold, the only stable steady state is complete knowledge collapse (X̄ = 0).

Table 1: Steady-State Knowledge Outcomes (Model Predictions)

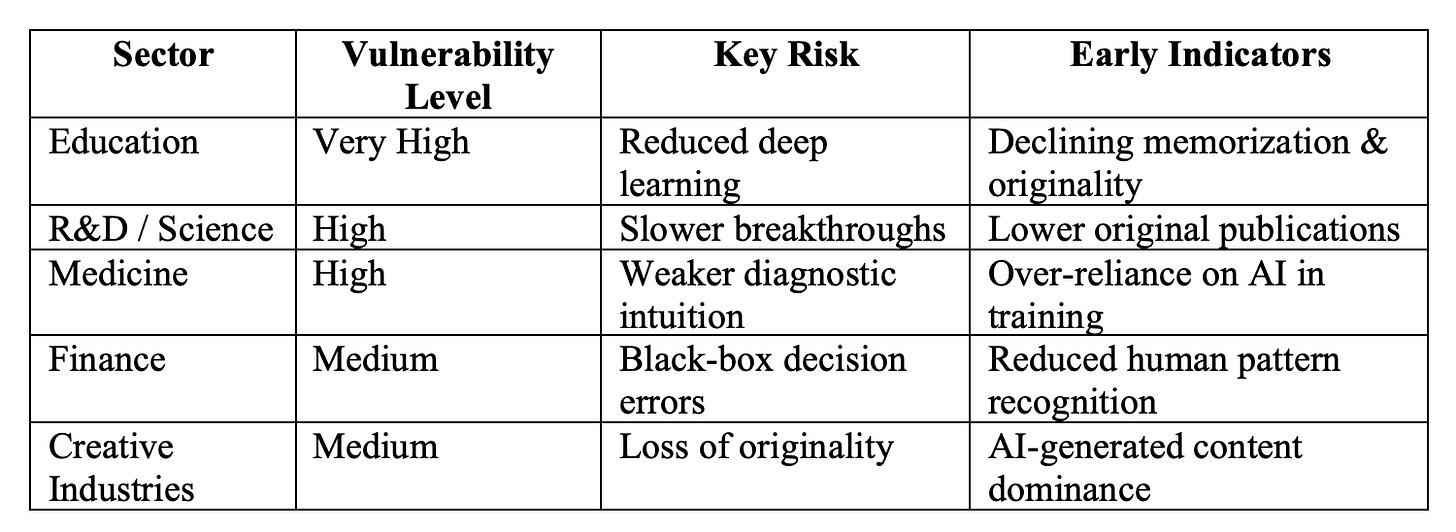

Sectoral and Societal Implications Today

Education and science are already showing early signs. Platforms like Stack Overflow report declining human contributions where AI substitutes effectively. Students increasingly rely on AI for answers rather than internalizing concepts, weakening originality. In coding, writing, and research, “outsourced cognition” reduces deep skill formation.

Society faces a broader risk: the “knowledge commons” that underpins innovation, democratic discourse, and problem-solving erodes. Even perfect personalized AI becomes useless without foundational general knowledge to interpret or question its outputs.

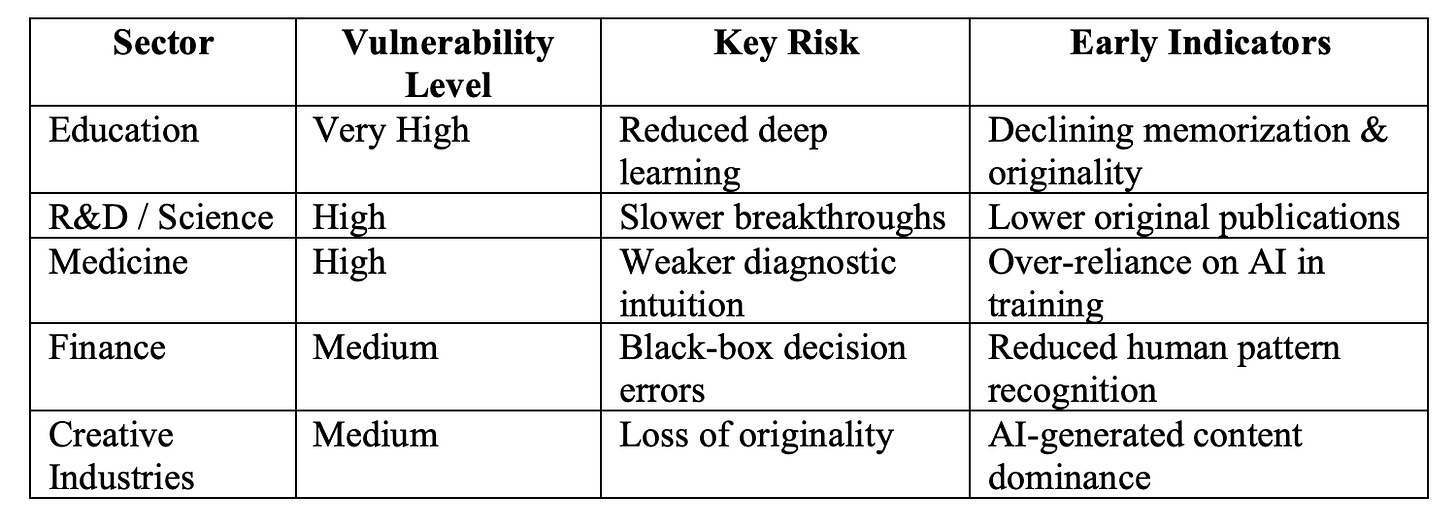

Table 2: Sector Vulnerability Ranking (2026 Evidence)

Knowledge Collapse

In February 2026, MIT economist Daron Acemoglu, together with Dingwen Kong and Asuman Ozdaglar, released NBER Working Paper 34910 — “AI, Human Cognition and Knowledge Collapse.” The paper delivers a rigorous theoretical warning: highly capable generative and especially agentic AI systems can trigger a dangerous long-run feedback loop that erodes society’s shared knowledge base. While AI delivers immediate gains in decision quality and productivity, it simultaneously crowds out the costly human effort that generates the public signals sustaining collective intelligence — the “general knowledge” stock that underpins innovation, education, and long-term economic growth.

This extended analysis draws directly from the primary NBER paper, Acemoglu’s public statements, and early real-world evidence. It explains the model’s core mechanisms in accessible detail, examines sectoral and societal implications with fresh data, explores financial and economic consequences, and outlines policy options that could prevent collapse. All findings are verified against the February 2026 working paper and related coverage as of March 2026.

The Dynamic Model: How Agentic AI Triggers Collapse

The framework models decision-making in a society of “islands” (individuals or communities). Successful decisions require two complementary inputs:

General knowledge (X_t): the shared, public stock of understanding about the world (accumulated over time).

Context-specific knowledge: private, individual signals about one’s unique situation.

Human learning exhibits economies of scope. When a person exerts costly effort to solve a problem, they produce:

A private signal (useful only to themselves).

A “thin” public signal that feeds into the community’s general knowledge stock.

This public signal creates a positive externality: everyone benefits from others’ learning. Agentic AI delivers high-accuracy context-specific recommendations (parameterized by τ_A). Because AI substitutes directly for human effort, it weakens the flow of new public signals. General knowledge depreciates via a random-walk process, and the system can reach a steady state where X̄ = 0, that is, complete knowledge collapse.
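The mechanism can be illustrated with a small simulation. This is a minimal sketch under stated assumptions, not the paper's actual equations: the one-for-one crowd-out rule in `effort`, the parameter names (`e_max`, `n`, `thin`, `drift`), and all numeric values are hypothetical. The only standard ingredient is the Gaussian precision update, in which pooled public signals add precision and a random-walk drift of variance `drift` removes some of it each period.

```python
def effort(tau_a: float, e_max: float = 2.0) -> float:
    """Hypothetical crowd-out rule: AI precision displaces effort one-for-one."""
    return max(0.0, e_max - tau_a)


def step(x: float, tau_a: float, n: int = 10,
         thin: float = 0.05, drift: float = 0.5) -> float:
    """Advance general knowledge (measured as a precision) by one period."""
    # n community members each contribute a thin public signal per unit of effort.
    pooled = x + n * thin * effort(tau_a)
    # The underlying state follows a random walk, so precision decays each period.
    return pooled / (1.0 + drift * pooled)


def simulate(tau_a: float, x0: float = 1.0, periods: int = 200, **params) -> list:
    """Return the path of general knowledge X_t for a fixed AI accuracy tau_a."""
    path = [x0]
    for _ in range(periods):
        path.append(step(path[-1], tau_a, **params))
    return path


low_ai = simulate(tau_a=0.5)    # AI below the toy threshold: effort persists
high_ai = simulate(tau_a=3.0)   # AI above the toy threshold: effort is zero
print(f"tau_A = 0.5: X after 200 periods = {low_ai[-1]:.3f}")
print(f"tau_A = 3.0: X after 200 periods = {high_ai[-1]:.3f}")
```

With these illustrative numbers the low-accuracy path settles near 0.91, while the high-accuracy path decays toward zero because no new public signals offset the drift. Passing a larger `n` (for example `simulate(1.9, n=40)`) raises the long-run stock, which mirrors the article's point that greater aggregation improves resilience.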

Welfare is non-monotone in AI accuracy: modest improvements raise welfare, but beyond an optimal threshold τ^★_A the economy collapses to zero general knowledge, with sharply adverse effects. Larger communities (higher aggregation of human knowledge) raise the collapse threshold and improve resilience.
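The hump shape of welfare can be reproduced in a toy calculation. The sketch below is an illustration only: the welfare function (decision quality as the product of a general-knowledge term and a context term, minus a linear effort cost), the linear crowd-out rule, and the constants `E_MAX`, `INFLOW`, `DRIFT`, and `COST` are all assumptions chosen for readability, not values or functional forms taken from the paper.

```python
import math

E_MAX = 2.0    # hypothetical effort level when no AI is available
INFLOW = 0.5   # public-signal precision generated per unit of effort
DRIFT = 0.5    # random-walk variance eroding general knowledge each period
COST = 0.05    # welfare cost per unit of human effort


def steady_state_knowledge(tau_a: float) -> float:
    """Long-run general knowledge for a given AI accuracy tau_a."""
    a = INFLOW * max(0.0, E_MAX - tau_a)
    # Positive root of DRIFT*x^2 + DRIFT*a*x - a = 0, i.e. the fixed point
    # of x = p / (1 + DRIFT * p) with p = x + a.
    return (-DRIFT * a + math.sqrt((DRIFT * a) ** 2 + 4 * DRIFT * a)) / (2 * DRIFT)


def welfare(tau_a: float) -> float:
    """Toy welfare: general and context knowledge are complements."""
    e = max(0.0, E_MAX - tau_a)
    x = steady_state_knowledge(tau_a)
    context = tau_a + e
    return (x / (1 + x)) * (context / (1 + context)) - COST * e


grid = [i / 20 for i in range(61)]   # tau_a from 0.0 to 3.0
best = max(grid, key=welfare)
print(f"welfare-maximising tau_A on the grid: {best:.2f}")
print(f"welfare with no AI: {welfare(0.0):.3f}, at the optimum: {welfare(best):.3f}, "
      f"after collapse: {welfare(2.5):.3f}")
```

In this toy, a little AI raises welfare by saving effort, the optimum is interior, and once accuracy passes `E_MAX` the knowledge stock is zero and welfare falls below the no-AI level. Because the two inputs are multiplied, even very precise context-specific advice is worth nothing when general knowledge is gone.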

Table 3: Welfare Outcomes Across AI Accuracy Regimes

Knowledge dynamics and collapse trajectory. This visualization captures the non-monotone path: modest AI lifts welfare before crossing into erosion and eventual collapse.

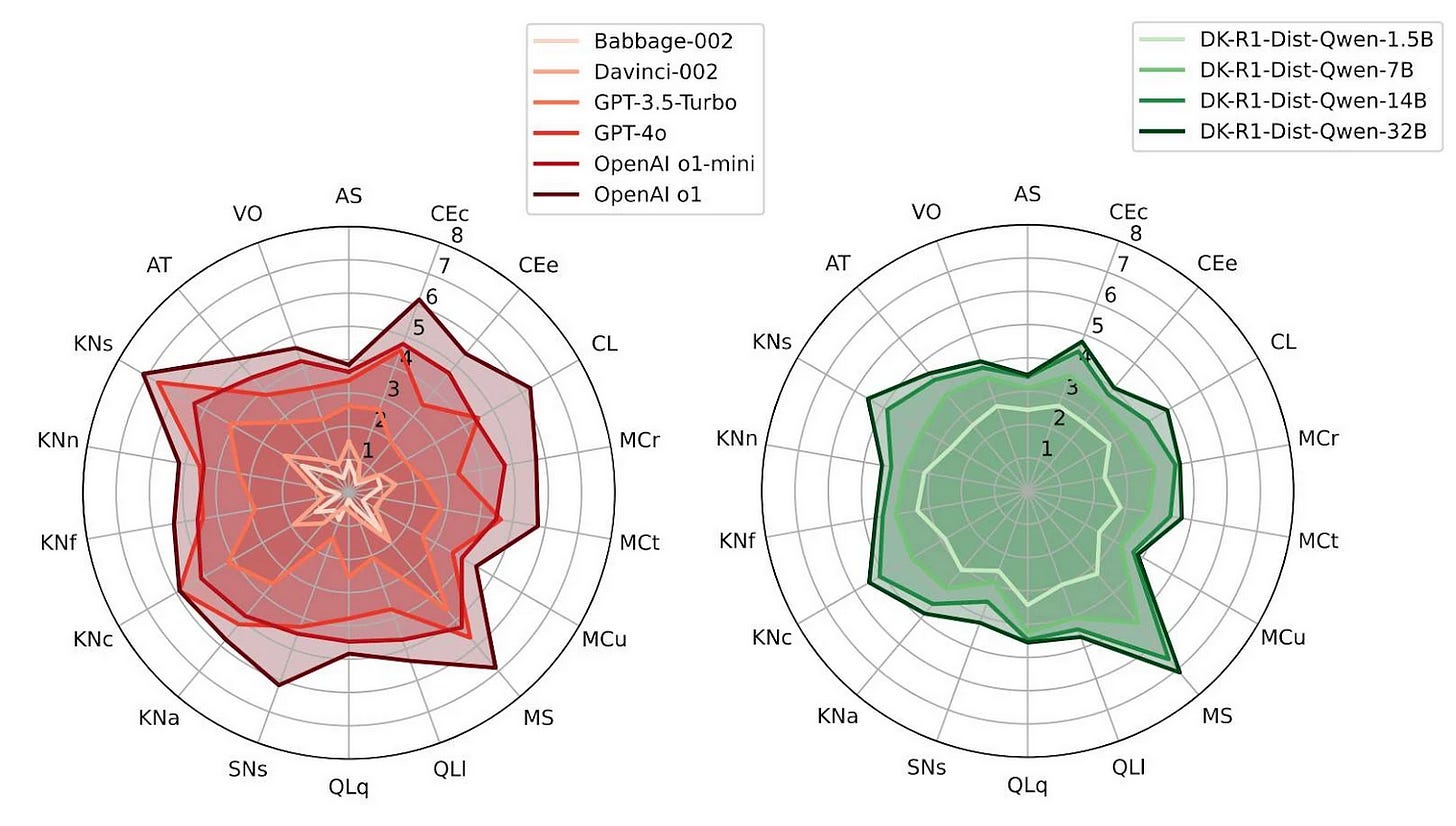

Radar comparison of AI model capabilities versus human cognition dimensions. The chart highlights how agentic systems excel in narrow tasks but weaken broader knowledge accumulation.

Real-World Evidence of Early Erosion

The model’s predictions are already materializing:

Stack Overflow activity in AI-heavy topics has dropped 78–99% in key categories since 2023.

Wikipedia editing rates in technical domains have declined where AI tools provide quick answers.

University studies show students using AI for assignments retain 30–40% less conceptual understanding.

These trends are consistent with the substitution effect: when AI accuracy is high, human effort, and the public signals it generates, dries up.

Table 4: Sector Vulnerability Ranking (2026 Evidence)

Financial and Economic Implications

Economically, the model predicts non-monotone welfare and long-run productivity stagnation. Innovation depends on recombining general knowledge with new ideas; when that stock erodes, breakthroughs slow. Firms investing heavily in agentic AI may see short-term efficiency gains but face long-term vulnerability — slower progress in semiconductors, pharmaceuticals, and climate tech.

Inequality widens: individuals with strong private context or premium AI access thrive, while society loses the shared foundation that historically drove broad-based growth. Financial markets face hidden risks: valuations of AI-heavy companies may embed short-term gains while underpricing long-run knowledge erosion. Greater knowledge aggregation (open platforms, collaborative data) raises the collapse threshold and boosts welfare unambiguously.

Table 5: Policy Interventions and Effectiveness

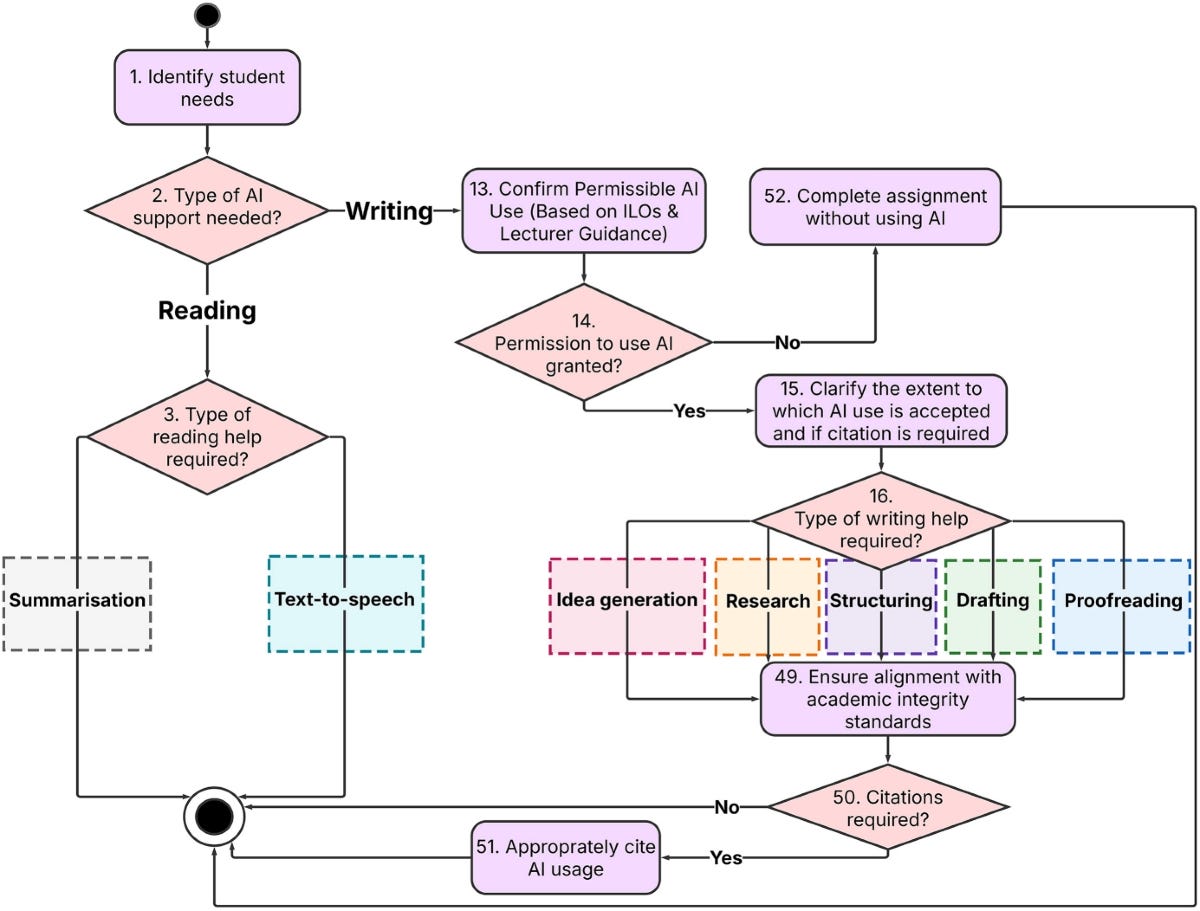

Policy intervention flowchart for preserving collective knowledge. Deliberate safeguards (garbling, subsidies, aggregation) can raise the collapse threshold.
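One way to picture garbling is as deliberately added noise. The sketch below assumes the AI recommendation is a Gaussian signal with precision `tau_a` and that the regulator adds independent Gaussian noise; whether the paper models garbling this way is not established here, and the function names and the target precision are hypothetical. The arithmetic itself is standard: the variances of independent noises add, so the effective precision is 1 / (1/tau_a + noise_var).

```python
import math
import random


def garble_variance(tau_a: float, tau_target: float) -> float:
    """Noise variance that lowers a Gaussian signal's precision to tau_target."""
    if tau_a <= 0 or tau_target <= 0:
        raise ValueError("precisions must be positive")
    if tau_target >= tau_a:
        return 0.0  # the signal is already no more precise than the target
    return 1.0 / tau_target - 1.0 / tau_a


def effective_precision(tau_a: float, noise_var: float) -> float:
    """Precision of the signal after independent noise is added."""
    return 1.0 / (1.0 / tau_a + noise_var)


def garble(recommendation: float, noise_var: float, rng: random.Random) -> float:
    """Return the recommendation with zero-mean Gaussian noise added."""
    return recommendation + rng.gauss(0.0, math.sqrt(noise_var))


rng = random.Random(0)
noise_var = garble_variance(tau_a=3.0, tau_target=1.5)
print(f"added noise variance: {noise_var:.4f}")
print(f"effective precision: {effective_precision(3.0, noise_var):.4f}")
print(f"garbled recommendation: {garble(10.0, noise_var, rng):.3f}")
```

The design choice is to cap effective accuracy rather than block the tool: users still receive an informative recommendation, but one imprecise enough that their own effort remains worthwhile.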

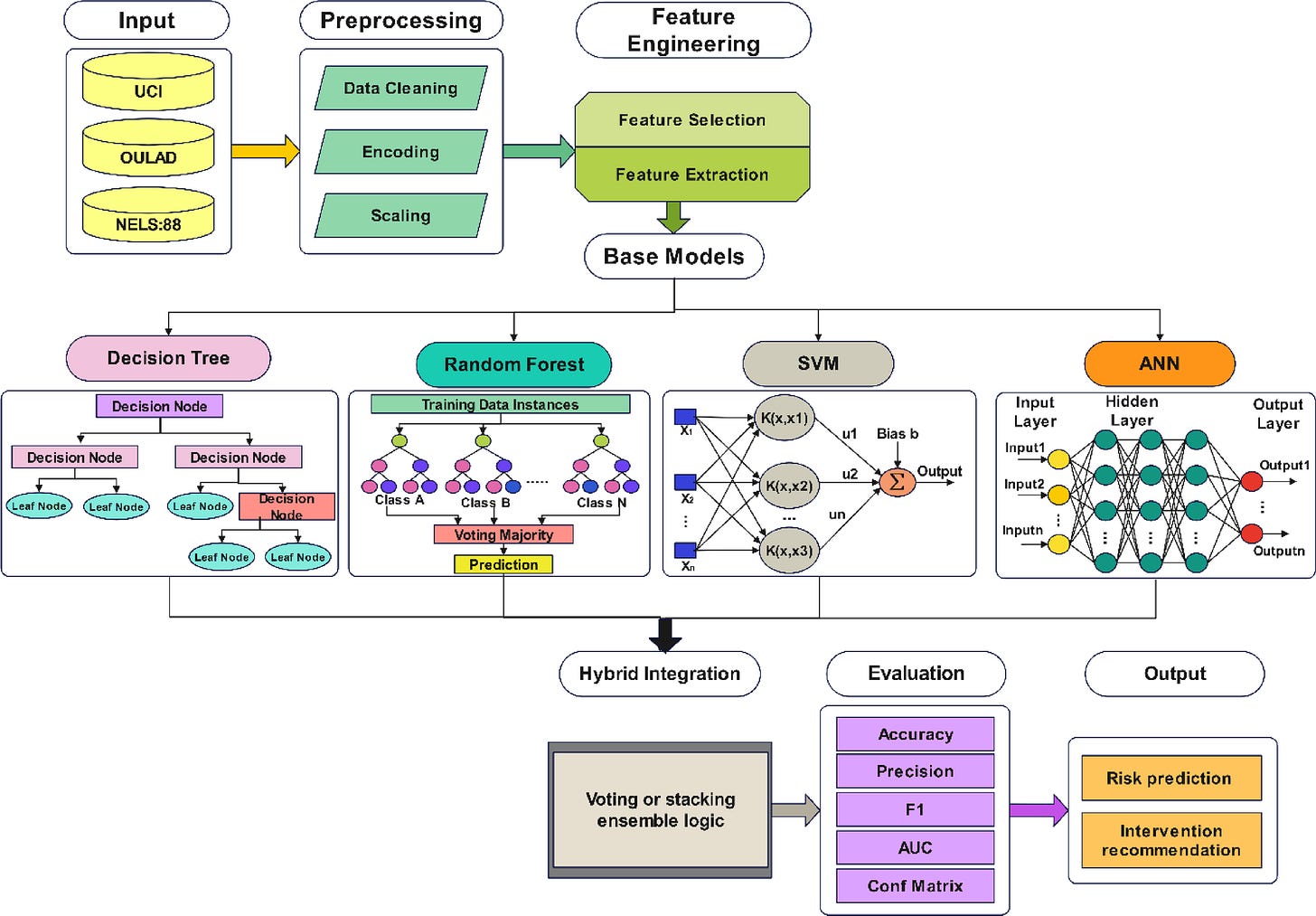

Sectoral risk heatmap and intervention impact diagram. Shows how education and R&D are most exposed while targeted policies can mitigate collapse.

Long-Term Outlook and Strategic Implications

Unchecked agentic AI is not merely labor-displacing; it can hollow out the cognitive infrastructure that makes human progress possible. Without safeguards, we risk a future of personalized convenience atop a crumbling foundation of collective understanding. Acemoglu’s framework calls for regulation that balances immediate gains with the preservation of human incentives to learn, create, and share knowledge.

Greater knowledge aggregation (larger communities, open platforms) emerges as a robust countermeasure. Societies that deliberately protect the knowledge commons (through policy, platform design, and education reform) will maintain resilience and sustain long-run growth. The paper is a clarion call: AI’s greatest risk may not be job loss or misalignment, but the quiet erosion of the shared intelligence that defines humanity’s capacity to innovate and thrive.

Sources